Prototype

GenAI Medical Assistant — RAG Platform (Safety + OCR Intake)

Reliable AI assistant: grounded answers, evaluation scripts, and a document approval workflow

Role

Solo Developer

Type

AI Platform (Web + Backend)

Stack

Ollama (Local LLM), ChromaDB (Vector DB), Docker Compose, GitHub Actions, OCR Pipeline, FastAPI, Python, RAG (Retrieval-Augmented Generation), Next.js

Overview

A production-style GenAI assistant that answers from retrieved, grounded context instead of guessing from the model's unaided recall. I built the full pipeline: document intake (OCR with scan-quality scoring), a review/approval workflow for sources, RAG retrieval, and a deterministic safety/triage layer. The system is containerized with Docker Compose and backed by a CI pipeline (lint/test/build) that keeps it reproducible and deployable.
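To make "grounded rather than guessing" concrete, here is a minimal sketch of a response-assembly gate, assuming retrieval hits arrive as (document id, text, distance) records where lower distance means more similar. `Chunk`, `MAX_DISTANCE`, `assemble_prompt`, `answer` and the refusal wording are hypothetical names for illustration, not the project's actual code; the model call is passed in as a plain callable rather than a real Ollama client.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class Chunk:
    doc_id: str
    text: str
    distance: float  # assumed: lower = more similar, as with vector-store distances


# Hypothetical cutoff; the right value depends on the embedding model and distance metric.
MAX_DISTANCE = 0.35
REFUSAL = "I could not find this in the approved documents, so I can't answer reliably."


def assemble_prompt(question: str, hits: list[Chunk], max_chunks: int = 3) -> str | None:
    """Build a grounded prompt from relevant chunks, or return None if none qualify."""
    relevant = sorted(
        (h for h in hits if h.distance <= MAX_DISTANCE), key=lambda h: h.distance
    )[:max_chunks]
    if not relevant:
        return None
    sources = "\n".join(
        f"[{i}] ({h.doc_id}) {h.text}" for i, h in enumerate(relevant, start=1)
    )
    return (
        "Answer using ONLY the numbered sources below and cite them like [1]. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}\nAnswer:"
    )


def answer(question: str, hits: list[Chunk], generate: Callable[[str], str]) -> str:
    """Call the model only when there is grounded context; otherwise refuse."""
    prompt = assemble_prompt(question, hits)
    if prompt is None:
        return REFUSAL  # no relevant context: decline instead of guessing
    return generate(prompt)
```

In a setup like this, `hits` would come from a vector-store query and `generate` would wrap the local LLM call; keeping both outside the function makes the gate easy to unit-test without either service running.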

Key Features

- Retrieval-grounded answers (RAG) to reduce hallucinations

- Deterministic safety + triage layer before responding

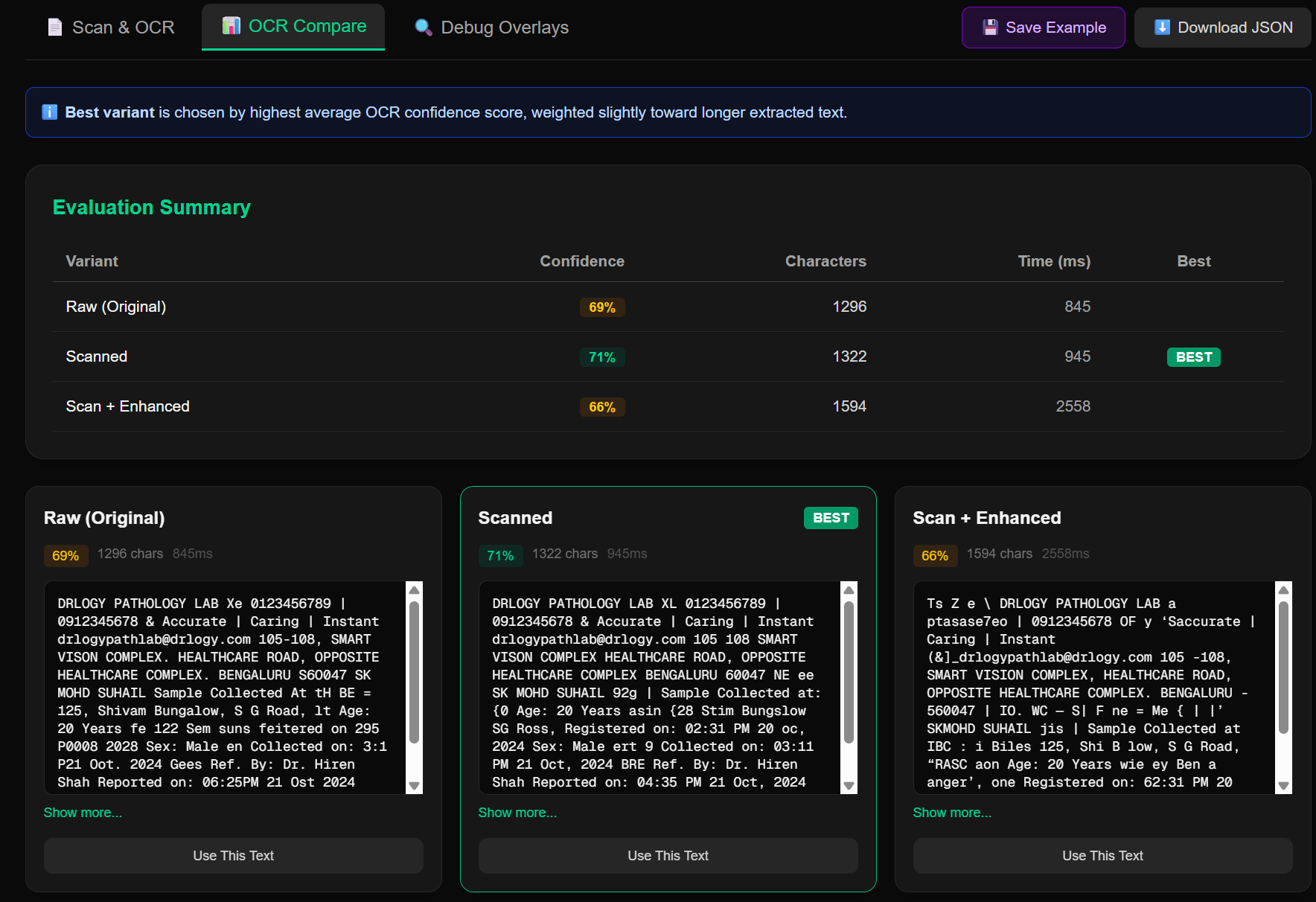

- OCR document intake with scan-quality scoring

- Review/approve workflow for document sources

- Evaluation scripts (grounded vs non-grounded comparisons)

- Docker Compose orchestration with health checks

- CI pipeline (lint/test/build) for reproducible deployments
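The intake path (OCR, scan-quality scoring, then human approval) can be sketched as a small state machine. This assumes the OCR engine reports per-word confidence on a 0-100 scale, with negative values for non-word boxes (Tesseract's TSV output uses -1 this way). The scoring formula, `MIN_QUALITY`, and the names `Status`, `scan_quality`, `intake`, `review` and `indexable` are hypothetical; the write-up does not describe the project's actual scoring.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    NEEDS_RESCAN = "needs_rescan"
    PENDING_REVIEW = "pending_review"
    APPROVED = "approved"
    REJECTED = "rejected"


MIN_QUALITY = 0.6  # hypothetical threshold for sending a scan to human review


def scan_quality(confidences: list[float], low_cutoff: float = 60.0) -> float:
    """Score a scan in [0, 1] from per-word OCR confidences on a 0-100 scale."""
    words = [c for c in confidences if c >= 0]  # negative values mark non-word boxes
    if not words:
        return 0.0
    mean = sum(words) / len(words) / 100.0
    low_share = sum(1 for c in words if c < low_cutoff) / len(words)
    # Penalize scans where many words were read with low confidence.
    return mean * (1.0 - low_share)


@dataclass
class SourceDoc:
    doc_id: str
    quality: float
    status: Status


def intake(doc_id: str, confidences: list[float]) -> SourceDoc:
    quality = scan_quality(confidences)
    status = Status.PENDING_REVIEW if quality >= MIN_QUALITY else Status.NEEDS_RESCAN
    return SourceDoc(doc_id, quality, status)


def review(doc: SourceDoc, approve: bool) -> SourceDoc:
    """A reviewer decision; only documents awaiting review can change state."""
    if doc.status is not Status.PENDING_REVIEW:
        raise ValueError(f"{doc.doc_id} is {doc.status.value}, not pending_review")
    doc.status = Status.APPROVED if approve else Status.REJECTED
    return doc


def indexable(docs: list[SourceDoc]) -> list[SourceDoc]:
    """Only approved documents should reach the vector store."""
    return [d for d in docs if d.status is Status.APPROVED]
```

The design point is that indexing reads only from `indexable`, so a low-quality or unreviewed scan can never become retrieval context.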

Challenges & Learnings

- Designing safety rules that behave predictably instead of varying with the model's output

- Making the stack reproducible across machines with containers
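One way to get predictable safety behavior is to keep triage entirely rule-based, so the same message always produces the same decision and emergencies never reach the model. The sketch below assumes simple regex rules with three severity levels; the patterns, reply texts and names (`Triage`, `RULES`, `triage`, `respond`) are hypothetical and purely illustrative, not a clinically validated rule set.

```python
from __future__ import annotations

import re
from dataclasses import dataclass
from enum import IntEnum
from typing import Callable


class Triage(IntEnum):
    ROUTINE = 0
    URGENT = 1
    EMERGENCY = 2


@dataclass(frozen=True)
class Rule:
    name: str
    pattern: re.Pattern
    level: Triage


# Illustrative patterns only; a real rule set needs clinical review and far wider coverage.
RULES = (
    Rule("chest_pain", re.compile(r"\bchest (pain|tightness)\b", re.I), Triage.EMERGENCY),
    Rule("breathing", re.compile(r"\b(can'?t|cannot|trouble) breath(e|ing)\b", re.I), Triage.EMERGENCY),
    Rule("bleeding", re.compile(r"\bblood in (my )?(stool|urine|vomit)\b", re.I), Triage.URGENT),
)

EMERGENCY_REPLY = "This may be an emergency. Please contact your local emergency number now."
URGENT_PREFIX = "Please contact a clinician promptly. "


@dataclass(frozen=True)
class TriageResult:
    level: Triage
    matched: tuple[str, ...]


def triage(message: str) -> TriageResult:
    """Same input, same output: plain pattern matching, no model call."""
    hits = [r for r in RULES if r.pattern.search(message)]
    level = max((r.level for r in hits), default=Triage.ROUTINE)
    return TriageResult(level, tuple(r.name for r in hits))


def respond(message: str, answer_fn: Callable[[str], str]) -> str:
    result = triage(message)
    if result.level is Triage.EMERGENCY:
        return EMERGENCY_REPLY  # fixed text; the model is never called
    reply = answer_fn(message)
    if result.level is Triage.URGENT:
        reply = URGENT_PREFIX + reply
    return reply
```

Because the rules are data, each one can be covered by a plain unit test in CI, which is harder to do for safety behavior that depends on prompting the model.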

My Contributions

- Designed end-to-end architecture (frontend, API, retrieval, storage)

- Implemented OCR ingestion + scoring + approval workflow

- Built retrieval pipeline and response assembly logic

- Added deterministic safety/triage checks

- Containerized services with Docker Compose + health checks

- Added GitHub Actions CI for lint/test/build gates

- Wrote evaluation scripts to compare grounded vs non-grounded outputs
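The write-up does not say which metrics the evaluation scripts use, so the following is a hypothetical sketch of a grounded vs non-grounded comparison: run the same question set through both modes, score fact recall with simple substring checks, and credit a refusal when a question is out of scope. `EvalCase`, `DECLINE_MARKERS`, `score_answer`, `evaluate` and `compare` are illustrative names, and substring matching is a deliberately crude stand-in for a real scoring method.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable

AnswerFn = Callable[[str], str]

# Assumed phrases that count as a correct refusal for out-of-scope questions.
DECLINE_MARKERS = ("could not find", "not in the approved documents")


@dataclass
class EvalCase:
    question: str
    # Facts a correct answer must mention; empty means the assistant should decline.
    expected_terms: list[str] = field(default_factory=list)


def score_answer(case: EvalCase, answer: str) -> float:
    text = answer.lower()
    if not case.expected_terms:
        return 1.0 if any(m in text for m in DECLINE_MARKERS) else 0.0
    found = sum(1 for t in case.expected_terms if t.lower() in text)
    return found / len(case.expected_terms)


def evaluate(answer_fn: AnswerFn, cases: list[EvalCase]) -> float:
    if not cases:
        raise ValueError("need at least one eval case")
    return sum(score_answer(c, answer_fn(c.question)) for c in cases) / len(cases)


def compare(grounded: AnswerFn, non_grounded: AnswerFn, cases: list[EvalCase]) -> dict[str, float]:
    """Run the same cases through both modes so the scores are directly comparable."""
    return {
        "grounded": evaluate(grounded, cases),
        "non_grounded": evaluate(non_grounded, cases),
    }
```

Including out-of-scope questions matters here: a non-grounded model can score reasonably on fact recall while still failing every case where the right behavior is to decline.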